AI turned the whole tech industry into benchmarking addicts.

Benchmarking is nothing new to me. I've seen it used both as a sales and marketing tool and as part of the engineering process. But the scale of the obsession with benchmarking in AI is on a completely different level.

I've been building data infrastructure for more than 10 years now and most recently I've been building agentic systems for data and platform engineers at Typedef. To do that reliably, I had to build my own internal eval system because nothing off the shelf could evaluate what we were building.

I've also seen benchmarketing 1 2 3 4, benchmarking that turned into vendor warfare, but nothing compares to what is happening today with AI. The noise is so bad that it's reasonable that people have started losing faith in the performance claims they see out there.

People are not crazy though. There is a good reason for all the interest in these benchmarks and evals, and it's not that different from why benchmarks have been around for so long in the database world.

There's a lot to learn from that. What I'll do here is walk you through the history of how the database industry dealt with the exact same problem, show you why the same patterns are repeating in AI at an even greater scale and make the case for why you can and should build your own evals to do due diligence on the vendors you are interacting with.

By the end you will have a playbook for turning vendor benchmarks from their marketing tool into your due diligence tool!

The problem

There's pressure to deliver the promises of AI through your team. It's 2026, we've been promised a brand new world of unparalleled performance by incorporating AI in everything and as the person responsible for your data team's tooling, you're the one who has to figure out which of these promises are real.

It's not only pressure from above though, it's also pressure from peers. Look at all these product engineers, how they churn out new front-end features in a tenth of the time it used to take, and they do it by using these silly looking terminal tools like Claude Code. How can we do the same as data practitioners?

We had projects to deliver agentic analytics, that would actually put the data org in an amazing position when delivered. Imagine every business user being able to answer any question they have on Slack without having to open a ticket and wait a few engineering cycles before they could get a dashboard.

But it seems that it's safe to hallucinate code, because there's a compiler and then an engineer to catch the issue before it hits production. You can't afford to hallucinate business metrics, though, and these models, when left unsupervised to run arbitrary SQL on a realistic data warehouse, do hallucinate a lot 5 6!

But wait, we can go and use semantic layers instead of raw SQL, this will solve the problem! And there's evidence that it helps significantly 7 8. Well, in theory yes, but in practice the agent will only be as good as your data modeling, which now lives on yet another layer of abstraction.

But regardless of the approach, the benchmarks and evals that vendors use to make their case were never designed for your workload.

Now we have something that was not designed for your needs being used to prove that a tool is going to work well for you. Guess what: it might convince you to buy, and then you will face the hard reality of the tool not delivering what was promised.

The issue is even more pronounced in data platforms and AI because there's a complete void there. The benchmarks just do not exist today, although this is slowly changing as we will see a bit later.

But even if the benchmarks were perfect, there's an important learning from the decades of using benchmarks in databases for evaluating vendors.

Sure, the latest frontier model can impress Dr. Knuth 9 by assisting with a proof, but the same model, when faced with your pipelines that broke because a Salesforce admin changed the currency of a column without letting you know, will end up in an existential crisis.

The problem is that today, someone will use the former to convince you to use LLMs to turn your team into 10x data engineers but will most probably avoid mentioning that the latter can also happen.

We can learn from the past though, and I'm here to show you how!

But first, let's do a small history lesson.

The benchmarketing wars of the database vendors

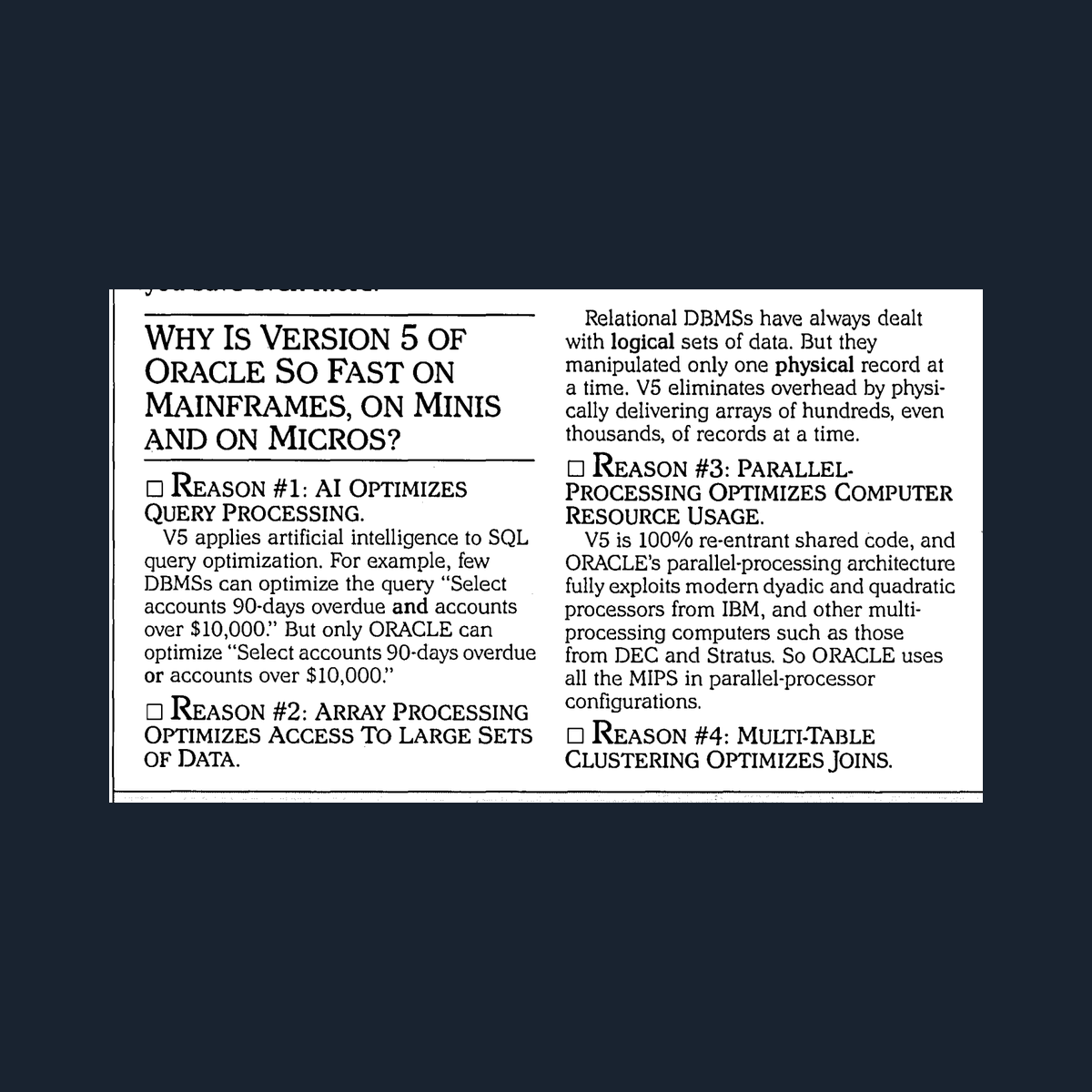

In the early to mid 1980s, the database market exploded from IBM mainframe dominance into a crowded field of competing vendors like Oracle, Sybase and others that all ran on different hardware 10. Each of these vendors started publishing their own performance numbers using self-designed tests optimized to make their system look best. Does this remind you of anything?

It's not like there weren't emergent "canonical" cases for benchmarks though. For example, a pretty standard one was the "DebitCredit benchmark", coming, not surprisingly, from a 1985 paper 11. It was a simple banking transaction: debit an account, credit another and finally log it. Vendors loved it because it was easy to run and easy to communicate to customers.

But then they started gaming it. Here are a few ways this happened.

- Running on unrealistic hardware configurations

- Omitting costs

- Tweaking configurations specifically for the benchmark workload

- Publishing numbers without disclosing how they got them

Jim Gray called the above "benchmarketing" 11: the process of using benchmarks as marketing weapons rather than engineering tools. This practice got so bad that the numbers vendors were publishing were essentially meaningless for actual purchasing decisions.

The industry's answer to this problem was the formation of TPC in 1988 by 34 vendors who agreed to standardize benchmarks with mandatory full disclosure reports, ACID compliance requirements and realistic pricing rules.

This sounds like a great solution to the problem, and TPC did try to be the solution, but despite its best efforts the system gradually eroded.

Membership collapsed, from 54 member companies in 1995 to 21 by 2022.

Vendors started cherry-picking. For example, instead of running full TPC benchmarks, vendors would take subsets of TPC queries and publish results without the auditing process.

In the end, the very problem TPC was created to solve (benchmarketing) re-emerged through the cracks. Vendors found ways to use TPC's credibility while avoiding its constraints.

Sound familiar? The Databricks vs Snowflake benchmarketing war linked in the footnotes is a perfect modern example of this exact dynamic playing out.

Jim Gray made it clear in his work that no single benchmark can measure performance across all application types. His four criteria for good benchmarks include relevance, which means that the benchmark must measure something the customer actually cares about. For example, TPC-H models a specific workload, ad-hoc analytics on a star schema. If your workload doesn't look like that, then TPC-H means nothing to you and can't inform your decision to use one system over another.

In practice, what happened in database procurement for decades was a two-stage process:

- Stage 1: Use TPC as a filter. Use it to narrow the field and ensure that a standard set of capabilities is supported by the systems in question. If some of the queries fail, for example, the system probably doesn't even support some functionality, so it's not even about performance and cost yet.

- Stage 2: Use your workload as the decision. Take the vendors from the output of stage 1, load your schema, your sample data and run your queries with all the gory edge cases that matter to you and see what happens.
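The two stages above can be sketched in a few lines of Python. This is only an illustration of the filter-then-decide logic: the `Vendor` fields, the 0.9 threshold and all the scores are hypothetical and not taken from any real benchmark.

```python
from dataclasses import dataclass


@dataclass
class Vendor:
    name: str
    standard_pass_rate: float  # share of the standard benchmark completed (stage 1)
    own_workload_score: float  # share of your own tasks solved (stage 2)


def shortlist(vendors, min_pass_rate=0.9):
    """Stage 1: the public benchmark is a filter, not a ranking."""
    return [v for v in vendors if v.standard_pass_rate >= min_pass_rate]


def decide(vendors):
    """Stage 2: rank the survivors on your own workload only."""
    return max(vendors, key=lambda v: v.own_workload_score, default=None)


# Hypothetical numbers, for illustration only.
vendors = [
    Vendor("A", standard_pass_rate=0.99, own_workload_score=0.55),
    Vendor("B", standard_pass_rate=0.93, own_workload_score=0.80),
    Vendor("C", standard_pass_rate=0.70, own_workload_score=0.95),
]

choice = decide(shortlist(vendors))
print(choice.name)  # B
```

Note that vendor A tops the standard benchmark but loses the decision, because once a vendor clears the stage 1 bar the public score no longer matters.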

It's hard to compress 40 years of tech industry evolution in a few lines but this is more or less what happened.

Now let's see how the same playbook is running again in AI, except this time everything is turned up to eleven 12.

The same patterns repeat in AI, in greater scale

In today's AI landscape, vendors are trying to control the narrative in ways that would look very familiar to anyone who lived through the database benchmarketing wars.

They publish leaderboard results on benchmarks they optimized for, they cherry-pick results by highlighting the benchmarks they perform best on and ignoring the rest, and they use benchmarks that amount to toy examples and can hardly represent any realistic workload.

The results are the same: trust is eroding fast and practitioners have stopped trusting the numbers while, at least for now, there's nothing better to replace them.

There are also some things that are even worse in AI compared to database benchmarks.

Model contamination is something that didn't exist in databases. LLMs are trained on massive datasets that often contain the benchmarks themselves, resulting in models that "memorize" the benchmarks 13. Even when contamination isn't the issue, something as trivial as changing the formatting of a benchmark question can swing accuracy by around 5% 14. The scores aren't just gamed, they're structurally fragile.

We don't yet have any neutral third-party body equivalent to TPC, which never managed to be perfect but was definitely better than what existed before. The best things we have today come primarily from academia, which safeguards the neutrality of the benchmarks but, as we mentioned earlier, is not aligned with the needs of the industry.

Why you can and you should build your own evals

Let's draw inspiration from the database world again. No one in the past four decades of benchmarketing hired dedicated benchmarking teams to figure out if Postgres is better than MySQL. What they did instead was create a sample workload from their real production environment: they loaded their own data, ran their own queries and exposed their own table statistics to see what happened. Instead of treating this as a research project they approached it as due diligence, and this is exactly the right way to do it in AI too.

You don't need to design a benchmark from scratch, you don't need to build the harness and you don't need to come up with the metrics and measurements either. What you need is to create a sample workload, representative of the tasks you are dealing with in your own environment, ideally focusing on the problems you most urgently want to see fixed in your organization.

By doing that, you change the dynamics of the conversations with the vendors you are dealing with. Instead of using their curated information to inform your decisions, now you can filter them based on the real needs that you have.

The alternative is what happens to teams that skip this step. You pick a tool based on a leaderboard score and a polished demo, spend months integrating it, and then discover it can't handle the things that actually matter in your environment. By that point you've burned budget, lost time and the credibility you need to make the next investment case to your leadership.

You might wonder now how this is possible without a harness and metrics and all the fancy stuff that makes up an AI/LLM eval system.

I have good news for you, the infrastructure to do this already exists and it's what the vendors are already using to engage in benchmarketing.

How to do it

Remember how TPC was used as a baseline in the database world? We can do something similar in AI. We will start with an eval system that is being built specifically for AI data platform tasks and it's one that is already being used by vendors 15 16 to claim their superiority. This system is called ADE-BENCH 17.

ADE-BENCH is an open benchmark led primarily by dbt Labs, designed to be vendor neutral. It covers the kinds of tasks data teams actually deal with, not just single text-to-SQL on toy schemas but multi-step work with plenty of dependencies that are represented with systems like dbt.

If you are a data leader you will be hearing about ADE-BENCH more and more, as vendors try to build their position in the market and prove the value they can deliver. As with TPC, you should definitely use whatever results they share on ADE-BENCH as a first filter to shortlist the vendors you want to consider.

But you can also use ADE-BENCH to perform due diligence on the vendors you decide to consider further. ADE-BENCH is built to be extensible. This is primarily so the community can contribute more tasks and make it more complete, but you can also use it to create your own private tasks and benchmark AI vendors on the things that matter to you.

Your data engineers already know everything about your data infrastructure and with some help from something like Claude Code, they can easily end up with a set of ADE-BENCH tasks that are representative of your environment. In summary, here's what it takes to build new tasks.

First, you need of course to have some data infrastructure in place. Ideally a dbt project and a data warehouse. If you are reading this post, you most probably already have that!

Next, you need bugs to inject. This is where your data engineers equipped with Claude Code can do miracles. The DEs know the edge cases and the most common headaches they are having, and they can very effectively guide Claude Code to find the assets that need to be altered and figure out nice ways to break them.

ADE-BENCH has a specific scaffolding for tasks. Again, just pointing Claude Code to the ADE-BENCH codebase makes it a trivial task to generate the right task definitions.

Finally, you need some seed CSVs for validation. Again, a DE together with Claude Code can do miracles here, generating representative data sets that respect the distributions you have without including any PII or otherwise sensitive data.
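To make the seed CSV step a bit more concrete, here is a minimal sketch of generating a synthetic seed file in Python. Everything in it is an assumption for illustration: the column names, the seat tiers and their weights are hypothetical, and this is not the format ADE-BENCH prescribes. The idea is simply to sample from a distribution you measured in production while using made-up identifiers instead of real ones.

```python
import csv
import io
import random


def make_seed_csv(n_rows, seat_choices, seat_weights, seed=0):
    """Build a synthetic seed CSV with fake IDs and a chosen seat distribution."""
    rng = random.Random(seed)  # fixed seed so the seed file is reproducible
    buf = io.StringIO()
    writer = csv.writer(buf, lineterminator="\n")
    # Hypothetical columns; replace with the columns your model actually reads.
    writer.writerow(["license_id", "customer_id", "licensed_seats"])
    for i in range(n_rows):
        seats = rng.choices(seat_choices, weights=seat_weights, k=1)[0]
        # Synthetic identifiers only, nothing copied from production.
        writer.writerow([f"lic-{i:04d}", f"cust-{rng.randrange(1000):04d}", seats])
    return buf.getvalue()


# Hypothetical distribution: most customers on the small tier.
seed_csv = make_seed_csv(5, seat_choices=[10, 50, 250], seat_weights=[0.6, 0.3, 0.1])
print(seed_csv)
```

In practice the weights would come from a quick aggregate query on your warehouse, so the seed data keeps the shape of your real data without carrying any of its contents.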

This is a project that can be fun for data engineers to work on and can add tremendous value to your organization!

Let me show you now an example that will demonstrate why no generic benchmark will be able to capture the complexity of a real organization like yours.

Example: A Task No Generic Benchmark Can Test

The model: License deduplication across four data sources.

A B2B SaaS company sells both self-hosted and cloud subscriptions. License data comes from four independent systems: the billing platform (Stripe), a legacy internal database, the CRM (Salesforce), and product telemetry (self-reported by customer servers). A single dbt model consolidates all four into one record per license.

This model encodes decisions that only make sense if you understand this company's specific data architecture:

Source trust hierarchy:

    coalesce(billing.starts_at, legacy.issued_at, crm.starts_at, telemetry.starts_at) as starts_at

Billing is most trusted, then legacy, then CRM, then telemetry. Note it uses legacy.issued_at and not legacy.starts_at, because in the legacy system, issued_at (when the license was created) is the correct start date. This distinction exists because the legacy system was built before the company had a formal license activation flow.

Hardcoded CRM exclusions:

    and o.opportunity_id not in (
        '0063p00000yaF7lAAE',
        '0063p00000xRa3LAAS',
        ... -- 10 specific IDs
    )

Known duplicates, test deals, and historical data issues. This is institutional knowledge: someone on the data team investigated each one and added it to the exclusion list.

Telemetry deduplication:

    having count(distinct licensed_seats) = 1
       and count(distinct starts_at) = 1
       and count(distinct expire_at) = 1

Self-reported license data is only trusted if a server reports consistent values across all reporting days. Ambiguous telemetry, where a server reports different seat counts on different days, is silently dropped.

License key validation:

    and len(license_key) = 26

This company's license keys are exactly 26 characters. Anything else is malformed.
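To see what the telemetry deduplication rule does in practice, here is a small sketch that runs the same HAVING clause against an in-memory SQLite database. The table layout, the `server_id` and `report_date` columns and the sample rows are assumptions made up for this illustration. Only the three `count(distinct ...) = 1` conditions come from the model.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
create table telemetry (
    server_id text, report_date text,
    licensed_seats integer, starts_at text, expire_at text
);
-- srv-1 reports the same values every day; srv-2 changes its seat count.
insert into telemetry values
    ('srv-1', '2024-01-01', 50, '2023-06-01', '2024-06-01'),
    ('srv-1', '2024-01-02', 50, '2023-06-01', '2024-06-01'),
    ('srv-2', '2024-01-01', 50, '2023-06-01', '2024-06-01'),
    ('srv-2', '2024-01-02', 75, '2023-06-01', '2024-06-01');
""")

# Keep only servers whose self-reported values never disagree.
trusted = [row[0] for row in conn.execute("""
    select server_id
    from telemetry
    group by server_id
    having count(distinct licensed_seats) = 1
       and count(distinct starts_at) = 1
       and count(distinct expire_at) = 1
    order by server_id
""")]

print(trusted)  # ['srv-1']
```

The inconsistent server disappears without any error or warning, which is exactly the kind of deliberate, silent behavior an agent has to understand before it touches this model.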

The bug

Change one column reference:

    -- Before (correct)
    coalesce(billing.starts_at, legacy.issued_at, crm.starts_at, telemetry.starts_at) as starts_at
    -- After (buggy)
    coalesce(billing.starts_at, legacy.starts_at, crm.starts_at, telemetry.starts_at) as starts_at

The model still compiles. The output still has a starts_at column with dates. But for legacy licenses, the start date is now wrong: starts_at doesn't exist in the legacy source with the intended meaning, so the COALESCE falls through to CRM or telemetry data (or NULL). License start dates shift by weeks or months, silently corrupting downstream ARR calculations.
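Here is a minimal reproduction of that silent failure, using SQLite from Python. It is a sketch under assumptions: the table layouts, the `license_id` join key and the sample dates are invented, and I'm assuming that `legacy.starts_at` exists as a column but was never populated, which is one way to read "doesn't exist with the intended meaning".

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
create table billing   (license_id text, starts_at text);
create table legacy    (license_id text, issued_at text, starts_at text);
create table crm       (license_id text, starts_at text);
create table telemetry (license_id text, starts_at text);

-- One legacy-only license: no billing row, legacy.starts_at left NULL (assumption).
insert into legacy    values ('lic-1', '2019-03-01', null);
insert into crm       values ('lic-1', '2019-04-15');
insert into telemetry values ('lic-1', '2019-05-02');
""")

# Same trust hierarchy as the model; only the legacy column is swapped.
QUERY = """
select coalesce(billing.starts_at, legacy.{legacy_col}, crm.starts_at, telemetry.starts_at)
from legacy
left join billing   on billing.license_id = legacy.license_id
left join crm       on crm.license_id = legacy.license_id
left join telemetry on telemetry.license_id = legacy.license_id
where legacy.license_id = 'lic-1'
"""

correct = conn.execute(QUERY.format(legacy_col="issued_at")).fetchone()[0]
buggy = conn.execute(QUERY.format(legacy_col="starts_at")).fetchone()[0]

print(correct)  # 2019-03-01
print(buggy)    # 2019-04-15
```

Both queries run cleanly and both return a plausible date. The only way to catch the difference is to know which of the two dates is the right one, and that knowledge lives with your team, not in the SQL.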

The agent prompt

Why this can't exist in a generic benchmark

To fix this, an agent must:

- Understand that this company has four independent license sources with different schemas and different reliability levels

- Know that the COALESCE order is a trust hierarchy, not arbitrary

- Realize that issued_at and starts_at mean different things in the legacy system specifically, a distinction that exists nowhere except in this code

- Trace the downstream impact to revenue calculations

Generic benchmarks test SQL competence: can the agent fix a wrong JOIN, create a model from a spec, propagate a column rename? Those are necessary skills. But they don't tell you whether an agent can navigate your data architecture: the decisions your team made, the edge cases you handle, the institutional knowledge encoded in your models.

A generic benchmark answers: "Can this agent do dbt work?"

A custom benchmark answers: "Can this agent do dbt work on our project, the way our team does it?"

Conclusion

The database industry spent 40 years learning a lesson that boils down to two stages. Use a standardized benchmark to filter the field. Then use your own workload to make the decision. That's it. TPC-H was never the answer, it was the starting line. The finish line was always your data, your queries, your edge cases.

The AI world is learning the same lesson right now, except the noise is louder, the benchmarks are more fragile and the stakes for data teams are higher than ever. You don't have to wait 40 years to figure this out.

Start with ADE-BENCH as your baseline. Get your data engineers to build tasks that reflect your actual environment, the bugs they deal with, the business logic only they understand. And the next time a vendor shows you a leaderboard score or a polished demo, ask them a simple question: "Can you run it on ours?"

If you want to learn more about how we built our own eval system at Typedef and what we learned from the process, check it out here.

I'll be publishing more on this topic soon, including a hands-on guide for building your own ADE-BENCH tasks from scratch.

Finally, if you're navigating this exact problem or you've already started building your own evals, I'd love to hear from you. Reach out on LinkedIn or X.


Footnotes

- https://www.databricks.com/blog/2021/11/02/databricks-sets-official-data-warehousing-performance-record.html

- https://www.snowflake.com/en/blog/industry-benchmarks-and-competing-with-integrity/

- https://www.databricks.com/blog/2021/11/15/snowflake-claims-similar-price-performance-to-databricks-but-not-so-fast.html

- https://siliconangle.com/2021/11/02/databricks-claims-warehouse-supremacy-benchmark-test-others-say-not-fast/

- https://cloud.google.com/blog/products/data-analytics/looker-semantic-layer-for-gen-ai

- https://jimgray.azurewebsites.net/BenchmarkHandbook/chapter1.pdf

- https://www.snowflake.com/en/blog/cortex-code-cli-expands-support/